This guide is for teams that already run their own RL trainer (PyTorch FSDP, Megatron, a custom Ray cluster, etc.) and want Fireworks for large-scale inference during rollouts.

Is this the right guide?

| Path | You own | Fireworks owns |
|---|---|---|
| This guide (BYOT rollouts) | Trainer, rewards, environment, checkpoint upload cadence | Hot-load deployment, distributed weight swap, inference, KV cache across rollouts |
| Training API | Training logic (recipes or SDK) | GPUs, trainer lifecycle, often FW_HOSTED bucket |
| Managed RFT | Dataset and evaluator | End-to-end hosted RL |

- Disaggregated: Your trainer and rollout cluster can run in different regions or clouds; deployments can span multiple regions to pool capacity.

- Full-parameter scale: Full (non-LoRA) tuning for large models supported on Fireworks inference shapes.

- Fast checkpoint transfer: Lossless compressed incremental snapshots (arc_v2, typically 20×+ compression) over standard object storage; no special RDMA networking is needed between trainer and inference.

- Async / off-policy friendly: Background download during rollouts; configurable swap semantics similar in spirit to PipelineRL (see checkpoint-swap behavior).

Placeholders

Reuse these values in every command below:

| Placeholder | Example |
|---|---|
| <account_id> | my-team |
| <model_id> | qwen3-30b-a3b |
| <deployment_id> | rl-rollout-prod |
| <fireworks_api_key> | From API keys |
| <your_bucket> / <your_upload_path> | Parent prefix configured on the deployment (no trailing slash) |
| <checkpoint_id> | Snapshot directory name, e.g. version_001 (no slashes) |

Prerequisites

Complete this checklist before creating a deployment:

- Fireworks account and API key: Create a key and set export FIREWORKS_API_KEY="<key>".

- Account ID: In the dashboard, open your account settings or any resource URL; the account slug is the segment after /accounts/ (for example accounts/<account_id>/...).

- Feature enablement: Request external-bucket hot-load for RL rollouts on account <account_id>, including your bucket provider (S3, GCS/gs://, or NEBIUS).

- Object storage read access for Fireworks: Fireworks needs read-only access to the bucket prefix you will pass as --hot-load-bucket-url. At enablement, Fireworks shares the IAM principal to grant access. Typical setup:

  - Amazon S3: Grant the Fireworks principal s3:GetObject (and s3:ListBucket on the prefix) on s3://<your_bucket>/<your_upload_path>/*.

  - Google Cloud Storage: Grant roles/storage.objectViewer on the bucket or prefix to the Fireworks service account provided at onboarding.

  - Nebius / MinIO: Equivalent read-only credentials or access key scoped to the upload prefix.

- firectl installed: See firectl.

- Base model and deployment shape: An RL-capable shape for your model (GPU count, precision). If you omit --deployment-shape, firectl prompts you to pick one interactively.

Architecture

You own: trainer, reward shaping, checkpoint cadence, rollout orchestration. Fireworks owns: hot-load logistics, distributed weight swap, inference serving, KV cache across rollouts.

End-to-end loop

- Create a hot-load deployment.

- Upload and hot-load an initial full snapshot.

- Run rollouts against that snapshot.

- For each training step: upload and hot-load the next incremental snapshot (see Incremental snapshots).

- Run rollouts again.

- Every 20th or 30th step, publish a full snapshot instead of an incremental one. If the incremental chain fails, fall back to a full snapshot.
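The cadence in the loop above can be sketched as a small scheduling helper. This is only an illustration: the function name `snapshot_plan` and the `full_every` parameter are hypothetical, and step 0 is assumed to be the initial full snapshot.

```python
from typing import List


def snapshot_plan(num_steps: int, full_every: int = 20) -> List[str]:
    """Return the snapshot kind to publish at each training step.

    Step 0 is the initial full snapshot; every `full_every`-th step after
    that is also full, and all other steps are incremental.
    """
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    if full_every < 1:
        raise ValueError("full_every must be at least 1")
    return [
        "full" if step % full_every == 0 else "incremental"
        for step in range(num_steps)
    ]


if __name__ == "__main__":
    plan = snapshot_plan(num_steps=45, full_every=20)
    full_steps = [i for i, kind in enumerate(plan) if kind == "full"]
    print(full_steps)  # steps that publish a full snapshot
```

A value of 20 or 30 for `full_every` matches the guidance above; the right number depends on how quickly you need to recover from a broken incremental chain.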

Quickstart checklist

Use this table for your first rollout end-to-end:

| Step | Action | Done when |
|---|---|---|
| 1 | Create hot-load deployment | firectl deployment get <deployment_id> shows a healthy deployment |
| 2 | Upload full HF snapshot | All files exist under .../<checkpoint_id>/ in object storage |
| 3 | POST signal snapshot | HTTP 200 |
| 4 | GET poll status | Every replica has readiness: true and current_snapshot_identity matches your identity |
| 5 | Run rollouts | Chat/completions returns tokens |

1. Create a hot-load deployment

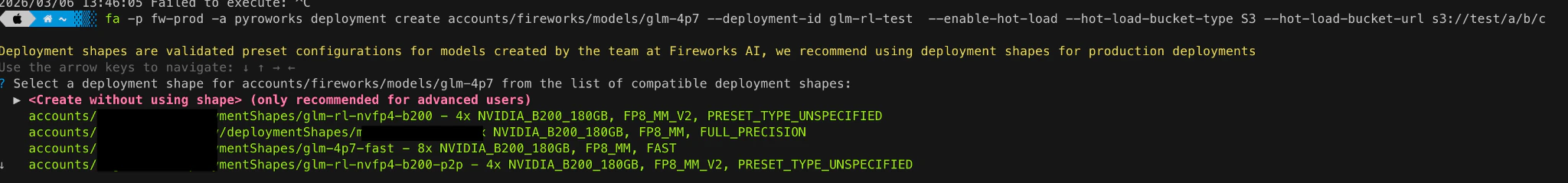

Create the deployment that will serve rollouts. During preview, --enable-hot-load flags may be hidden from CLI help but can still be passed explicitly.

Flags

- --deployment-shape: Optional. If omitted, firectl prompts you to pick one.

- --hot-load-bucket-type: MINIO, S3, NEBIUS, or FW_HOSTED. This guide focuses on external buckets (S3, gs://, etc.). FW_HOSTED is for Fireworks-managed trainers.

- --hot-load-bucket-url: Required when --enable-hot-load is set. Examples: s3://mybucket/path, gs://mybucket/path. No trailing slash. This is the parent prefix; each snapshot is a subdirectory named by identity (see snapshot layout).

- --hot-load-transition-type: ASYNC (recommended for RL) or SYNC. Defaults to ASYNC when hot load is enabled. See checkpoint-swap behavior.

- --region: Where the deployment runs (for example US_OHIO_1, US_VIRGINIA_1). Keep the trainer upload path geographically close to the bucket and deployment.
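As a rough sketch, the flags above can be assembled and sanity-checked in a launcher script before calling the CLI. The flag names come from the list above; the `firectl deployment create <model>` word order and the positional model argument are assumptions made by analogy with `firectl deployment get`, so confirm them against your installed firectl. The helper name `build_create_args` is hypothetical.

```python
from typing import List, Optional

BUCKET_TYPES = {"MINIO", "S3", "NEBIUS", "FW_HOSTED"}
TRANSITION_TYPES = {"ASYNC", "SYNC"}


def build_create_args(
    model: str,
    bucket_type: str,
    bucket_url: str,
    transition_type: str = "ASYNC",
    region: Optional[str] = None,
    deployment_shape: Optional[str] = None,
) -> List[str]:
    """Build an argv list for creating a hot-load deployment."""
    if bucket_type not in BUCKET_TYPES:
        raise ValueError(f"unknown bucket type: {bucket_type}")
    if transition_type not in TRANSITION_TYPES:
        raise ValueError(f"unknown transition type: {transition_type}")
    if bucket_url.endswith("/"):
        raise ValueError("bucket URL must not end with a trailing slash")

    # ASSUMPTION: subcommand order and positional model argument are not
    # confirmed by this guide; only the flags below are.
    args = [
        "firectl", "deployment", "create", model,
        "--enable-hot-load",
        "--hot-load-bucket-type", bucket_type,
        "--hot-load-bucket-url", bucket_url,
        "--hot-load-transition-type", transition_type,
    ]
    if region is not None:
        args += ["--region", region]
    if deployment_shape is not None:
        args += ["--deployment-shape", deployment_shape]
    return args


if __name__ == "__main__":
    print(" ".join(build_create_args(
        "accounts/my-team/models/qwen3-30b-a3b",
        "S3",
        "s3://mybucket/path",
        region="US_OHIO_1",
    )))
```

The list can be passed to `subprocess.run(args, check=True)`. Note that omitting `--deployment-shape` makes firectl prompt interactively, which is usually not what you want in automation.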

2. Upload and hot-load an initial full snapshot

Upload a full HuggingFace-format checkpoint, then signal Fireworks to load it.

Snapshot layout

Place each snapshot under its own subdirectory. The identity you signal in the API must match the directory name (a single path segment, no slashes):

- identity / <checkpoint_id>: Any opaque string (for example version_001 or step_00100).

- Format: Same layout as the base model on HuggingFace: config.json, tokenizer files, and safetensors weights. No tensor-parallel sharding in uploaded files.

- File size: Split weights into multiple .safetensors files, each under about 5 GB. Group weights by layer when possible; putting one layer per file minimizes load time.
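The layout rules above can be checked before uploading. The following is a minimal sketch under stated assumptions: tensor names follow the common HuggingFace `...layers.<N>....` pattern, the shard file names (`layer_00000.safetensors`, `non_layer.safetensors`) are hypothetical, and "about 5 GB" is taken as 5 × 10⁹ bytes.

```python
import re
from collections import defaultdict
from typing import Dict, List

MAX_SHARD_BYTES = 5 * 1000**3  # assumed reading of "about 5 GB"
_LAYER_RE = re.compile(r"\blayers\.(\d+)\.")


def snapshot_url(bucket_url: str, identity: str) -> str:
    """Join the parent prefix and the snapshot identity into one location."""
    if bucket_url.endswith("/"):
        raise ValueError("bucket URL must not end with a trailing slash")
    if not identity or "/" in identity:
        raise ValueError("identity must be a single non-empty path segment")
    return f"{bucket_url}/{identity}"


def group_by_layer(tensor_sizes: Dict[str, int]) -> Dict[str, List[str]]:
    """Map a shard file name to the tensors it should hold, one layer per file."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name in tensor_sizes:
        match = _LAYER_RE.search(name)
        stem = f"layer_{int(match.group(1)):05d}" if match else "non_layer"
        groups[f"{stem}.safetensors"].append(name)
    return dict(groups)


def oversized_shards(
    groups: Dict[str, List[str]], tensor_sizes: Dict[str, int]
) -> List[str]:
    """Return shard files whose total size reaches the size limit."""
    return sorted(
        shard
        for shard, names in groups.items()
        if sum(tensor_sizes[n] for n in names) >= MAX_SHARD_BYTES
    )


if __name__ == "__main__":
    sizes = {
        "model.embed_tokens.weight": 600_000_000,
        "model.layers.0.self_attn.q_proj.weight": 30_000_000,
        "model.layers.0.mlp.up_proj.weight": 90_000_000,
        "model.layers.1.self_attn.q_proj.weight": 30_000_000,
    }
    groups = group_by_layer(sizes)
    print(snapshot_url("s3://mybucket/path", "version_001"))
    print(sorted(groups), oversized_shards(groups, sizes))
```

If a single layer exceeds the limit, it would need to be split across several files; that case is left out of the sketch.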

Signal and poll

Use the Hot-load API below with { "identity": "<checkpoint_id>" } and poll until all replicas are ready.

Hot-load API

All hot-load requests use these headers:

| Header | Value |
|---|---|
| Authorization | Bearer <fireworks_api_key> |
| fireworks-model | accounts/<account_id>/models/<model_id> |
| fireworks-deployment | accounts/<account_id>/deployments/<deployment_id> |
| Content-Type | application/json |

| Operation | Method | URL |
|---|---|---|
| Signal snapshot ready | POST | https://api.fireworks.ai/hot_load/v1/models/hot_load |
| Poll load status | GET | https://api.fireworks.ai/hot_load/v1/models/hot_load |
| Per-file hint (optional) | POST | https://api.fireworks.ai/hot_load/v1/models/hot_load/hint |
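The headers and endpoints in the tables above can be wrapped in a few helpers. This sketch uses only the Python standard library; the helper names are hypothetical. The body fields (`identity`, `incremental_snapshot_metadata`, `previous_snapshot_identity`, `compression_format`, `checksum_format`) and their values are the ones this guide names, but the exact nesting of the incremental body is an assumption, so check it against the Incremental snapshots guide.

```python
import json
import urllib.request
from typing import Any, Dict, Optional

HOT_LOAD_URL = "https://api.fireworks.ai/hot_load/v1/models/hot_load"


def hot_load_headers(
    api_key: str, account_id: str, model_id: str, deployment_id: str
) -> Dict[str, str]:
    """Build the four headers every hot-load request carries."""
    return {
        "Authorization": f"Bearer {api_key}",
        "fireworks-model": f"accounts/{account_id}/models/{model_id}",
        "fireworks-deployment": f"accounts/{account_id}/deployments/{deployment_id}",
        "Content-Type": "application/json",
    }


def signal_body(identity: str, previous_identity: Optional[str] = None) -> Dict[str, Any]:
    """Build a full-snapshot body, or an incremental one if a parent is given."""
    if not identity or "/" in identity:
        raise ValueError("identity must be a single non-empty path segment")
    body: Dict[str, Any] = {"identity": identity}
    if previous_identity is not None:
        # ASSUMPTION: nesting of the incremental fields is not shown in this guide.
        body["incremental_snapshot_metadata"] = {
            "previous_snapshot_identity": previous_identity,
            "compression_format": "arc_v2",
            "checksum_format": "alder32",
        }
    return body


def signal_request(headers: Dict[str, str], body: Dict[str, Any]) -> urllib.request.Request:
    """POST request that signals a snapshot is ready to load."""
    data = json.dumps(body).encode("utf-8")
    return urllib.request.Request(HOT_LOAD_URL, data=data, headers=headers, method="POST")


def poll_request(headers: Dict[str, str]) -> urllib.request.Request:
    """GET request that returns the current load status."""
    return urllib.request.Request(HOT_LOAD_URL, headers=headers, method="GET")
```

To send a request, pass it to `urllib.request.urlopen` inside a `with` block and check `response.status`; a successful signal returns HTTP 200 according to the quickstart checklist.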

Signal snapshot ready

Full snapshot body: { "identity": "<checkpoint_id>" }. The incremental fields, including checksum_format, are documented in Incremental snapshots.

Request body fields:

- identity: Snapshot directory name under the configured bucket prefix. Must not contain /.

- incremental_snapshot_metadata: Required for incremental snapshots. Includes previous_snapshot_identity, compression_format (arc_v2), and checksum_format (alder32). See the incremental snapshots guide.

- Prompt-cache policy after the swap: all (default), none, or new_session. See prompt cache reset behavior.

- Top-level config.json fields to ignore during snapshot validation. Only use for known-safe metadata fields.

Poll load status

Poll until every replica has readiness: true and current_snapshot_identity equals the identity you signaled.

When to start rollouts

- Default (on-policy): Wait until all replicas report readiness on the new identity.

- Off-policy / higher utilization: You may start sending rollouts when a subset of replicas is ready; inspect each entry in replicas in the GET response. Stale-policy rollouts are expected; use async transition mode and monitor policy version in streaming responses (see Policy version in responses).
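Both start conditions above reduce to a threshold on the share of ready replicas. The sketch below assumes the GET response is a JSON object with a top-level `replicas` list whose entries carry `readiness` and `current_snapshot_identity`; those field names come from this guide, but the exact response shape is an assumption. `fetch_status` is a hypothetical callable that performs the GET and returns the parsed JSON.

```python
import time
from typing import Any, Callable, Dict


def ready_fraction(status: Dict[str, Any], identity: str) -> float:
    """Share of replicas that are ready and serving the given snapshot."""
    replicas = status.get("replicas", [])
    if not replicas:
        return 0.0
    ready = sum(
        1
        for replica in replicas
        if replica.get("readiness") is True
        and replica.get("current_snapshot_identity") == identity
    )
    return ready / len(replicas)


def wait_until_ready(
    fetch_status: Callable[[], Dict[str, Any]],
    identity: str,
    min_fraction: float = 1.0,
    timeout_s: float = 600.0,
    interval_s: float = 2.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll until enough replicas serve `identity`; return False on timeout."""
    deadline = clock() + timeout_s
    while True:
        if ready_fraction(fetch_status(), identity) >= min_fraction:
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)
```

Use `min_fraction=1.0` for on-policy training. A lower value, such as 0.5, starts rollouts earlier at the cost of some stale-policy samples.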

3. Run rollouts

Call the OpenAI-compatible inference API. For multi-turn RL, set session headers so the KV cache stays on one replica (see Inference for RL rollouts for the session affinity headers).

Steady-state training loop

After the first full snapshot:

- Intermediate steps: Build and upload an incremental snapshot (arc_v2), signal with incremental_snapshot_metadata, poll until ready, then run rollouts.

- Every 20th or 30th step: Publish a new full snapshot for faster recovery and chain reset.

- On failure: Fall back to a full snapshot; see Ledger & debugging.
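The three rules above can be combined into one per-step decision. In this sketch, `publish_incremental` and `publish_full` are hypothetical callables that upload, signal, and poll for one snapshot, and `SnapshotError` is a hypothetical exception they raise when any of those stages fails.

```python
from typing import Callable


class SnapshotError(Exception):
    """Hypothetical error raised by a publish callable when a snapshot fails."""


def publish_step(
    step: int,
    have_base: bool,
    publish_incremental: Callable[[int], None],
    publish_full: Callable[[int], None],
    full_every: int = 20,
) -> str:
    """Publish the snapshot for one training step and report which kind was used.

    A full snapshot is published when no base snapshot exists yet, on every
    `full_every`-th step, or when the incremental publish fails.
    """
    want_full = (not have_base) or step % full_every == 0
    if not want_full:
        try:
            publish_incremental(step)
            return "incremental"
        except SnapshotError:
            # Incremental chain is broken: fall through to a full snapshot.
            pass
    publish_full(step)
    return "full"
```

An error from `publish_full` is deliberately not caught, since there is no further fallback and the trainer should see the failure.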

Numerics alignment

For best training–inference alignment:

- Match quantization / precision between trainer checkpoints and the deployment shape (work with Fireworks if you need a custom shape).

- Measure logprob divergence between trainer forward passes and rollout inference on the same tokens.

- For MoE models, use Router Replay (R3) during rollouts—see MoE Router Replay.
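One way to measure the logprob divergence mentioned above is to compare per-token log-probabilities from the trainer and from rollout inference on the same sampled tokens. The sketch below is illustrative rather than a Fireworks-prescribed metric: the function name is hypothetical, and the `kl_k3` value is the commonly used low-variance sample estimator of KL(rollout ‖ trainer), which assumes the tokens were sampled from the rollout policy.

```python
import math
from typing import Dict, Sequence


def logprob_divergence(
    trainer_logprobs: Sequence[float], rollout_logprobs: Sequence[float]
) -> Dict[str, float]:
    """Summarize per-token disagreement between trainer and rollout logprobs."""
    if len(trainer_logprobs) != len(rollout_logprobs):
        raise ValueError("both sequences must cover the same tokens")
    if not trainer_logprobs:
        raise ValueError("need at least one token")

    # d = log p_trainer(token) - log p_rollout(token), for tokens sampled by rollout.
    diffs = [t - r for t, r in zip(trainer_logprobs, rollout_logprobs)]
    n = len(diffs)
    return {
        "mean_abs_diff": sum(abs(d) for d in diffs) / n,
        "max_abs_diff": max(abs(d) for d in diffs),
        # Each term exp(d) - 1 - d is non-negative and zero only when d == 0.
        "kl_k3": sum(math.exp(d) - 1.0 - d for d in diffs) / n,
    }


if __name__ == "__main__":
    stats = logprob_divergence([-1.0, -2.0, -0.5], [-1.1, -1.9, -0.5])
    print(stats)
```

Tracking these values per training step makes a precision or quantization mismatch visible as a sustained rise rather than a one-off spike.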

Next steps

Incremental snapshots

Build ARC2 deltas, per-file hints, and incremental signal bodies.

Ledger & debugging

Inspect snapshot history, reset the ledger, and reason about request behavior during weight swaps.

Inference for RL rollouts

Session affinity headers, policy version in streams, weight-swap behavior, and MoE Router Replay (R3).

Fireworks-hosted trainer

The alternative path where Fireworks runs the trainer through the Training API.